Hi,

I am using an oscilloscope to measure a 1000 Hz, 6 Vpp AC signal. I observed different traces on the screen at different sec/div settings, which I find interesting.

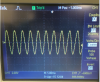

When sec/div is 1ms,

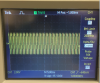

When sec/div is 5ms,

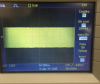

When sec/div is 100ms,

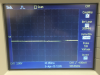

When sec/div is 250ms.

When the sec/div is 50s,

Observations:

1. As you can see, when sec/div is changed from 1ms to 5ms, the screen shows more cycles, as expected.

2. When sec/div is increased to 100ms, even more cycles are shown on the screen, but the oscilloscope switches to scan mode.

3. But when sec/div is increased to 250ms, the oscilloscope no longer shows the true input signal.

4. When sec/div is increased to 50s, the oscilloscope shows a signal with the same amplitude as the input signal but a different frequency.

I have two questions:

1. Why does the oscilloscope switch to scan mode when sec/div is increased to a certain value?

2. What is the reason for observations 3 and 4 above?
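
In case it helps with question 2, here is a rough Python sketch of one thing I suspect might be involved: the effective sample rate dropping as sec/div increases, until the 1000 Hz signal is undersampled. The record length of 2500 points and the 10 horizontal divisions are assumptions of mine, not values I have confirmed for my oscilloscope, and the function names are only for illustration.

```python
# Assumed values, NOT confirmed for my oscilloscope.
ASSUMED_RECORD_LENGTH = 2500   # samples per acquisition (assumption)
ASSUMED_DIVISIONS = 10         # horizontal divisions on screen (assumption)
SIGNAL_HZ = 1000.0             # input signal frequency from the post


def effective_sample_rate(sec_per_div,
                          record_length=ASSUMED_RECORD_LENGTH,
                          divisions=ASSUMED_DIVISIONS):
    """Samples per second if a fixed-length record spans the whole screen."""
    return record_length / (sec_per_div * divisions)


def apparent_frequency(signal_hz, sample_rate_hz):
    """Frequency an ideal sampler would show after folding into [0, fs/2]."""
    folded = signal_hz % sample_rate_hz
    return min(folded, sample_rate_hz - folded)


# The five sec/div settings from the post, in seconds.
for sec_per_div in (1e-3, 5e-3, 100e-3, 250e-3, 50.0):
    fs = effective_sample_rate(sec_per_div)
    undersampled = SIGNAL_HZ > fs / 2   # above the Nyquist limit fs/2
    shown = apparent_frequency(SIGNAL_HZ, fs)
    print(f"{sec_per_div:g} s/div: fs = {fs:g} S/s, "
          f"undersampled = {undersampled}, apparent = {shown:g} Hz")
```

Under these assumptions, the 1ms, 5ms and 100ms settings would still sample faster than twice the signal frequency, while 250ms and 50s would not. That lines up with where the trace stops looking like the input. The sketch treats the sampling as ideal and the signal as exactly 1000 Hz. If the generator is slightly off, the apparent frequency would be small but nonzero, and a real oscilloscope may also behave differently from this model.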

Thanks.
